Machine translation: the past, the present, the future

About the history of machine translation

In 1949, inspired in part by advances in cryptanalysis during World War II, the American scientist Warren Weaver wrote a memorandum entitled “Translation”, in which he became one of the first people to consider the possibility of using computers to translate human languages. Although reactions to Weaver’s memorandum ranged from enthusiasm to skepticism, it soon prompted both supporters and skeptics to investigate whether machine translation was feasible.

As a result of this research, the first automatic translation system, developed jointly by Georgetown University and IBM, was publicly demonstrated in 1954. At that event, nearly 60 carefully chosen sentences were translated from Russian into English. To do this, an operator had to punch each Russian sentence onto cards, which were then fed into the IBM 701 (known as the Defense Calculator), and the machine printed out the sentence in English within six or seven seconds.

Despite the great limitations of this translation system, the media considered the Georgetown-IBM experiment a major success (which it definitely was at the time), and the authors of the experiment announced that the problem of machine translation could be completely solved within three to five years. As a result, the US government allocated more funds to machine translation R&D, hoping to hasten a solution. As we now know, things did not go quite that smoothly, and the initial eagerness (along with the extra funding) dried up for a while.

Since then, machine translation has experienced several breakthroughs as well as setbacks, and utopian headlines in the media have regularly alternated with disappointment and doubt as to whether high-quality fully automatic translation is even possible. Next, let us take a closer look at a few major developments in the history of machine translation and examine how to distinguish hope from the current hype, i.e. actual future prospects from mere marketing razzle-dazzle.

Rule-based machine translation

Until now, there have been three main approaches to machine translation systems. The first is rule-based machine translation, which means describing the grammar and semantics of each language involved as precisely as possible and creating links between the two languages. In essence, this means building automatic dictionaries and grammar programs in which the elements of one language are matched with the elements of the other according to programmed language rules.
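To make the idea more concrete, here is a minimal sketch of a rule-based translator in Python. It is only an illustration: the five-word Spanish-English dictionary, the part-of-speech tags and the single reordering rule are all invented for this example and do not come from any real system.

```python
# Toy rule-based translator: a bilingual dictionary plus one reordering rule.
# The vocabulary, tags and rule are hypothetical and exist only for illustration.
LEXICON = {
    "la": ("the", "DET"),
    "casa": ("house", "NOUN"),
    "blanca": ("white", "ADJ"),
    "es": ("is", "VERB"),
    "grande": ("big", "ADJ"),
}


def translate(sentence: str) -> str:
    # Step 1: dictionary lookup; unknown words are passed through unchanged.
    tagged = [LEXICON.get(word, (word, "UNK")) for word in sentence.lower().split()]

    # Step 2: one hand-written grammar rule:
    # Spanish "NOUN ADJ" becomes English "ADJ NOUN".
    i = 0
    while i < len(tagged) - 1:
        if tagged[i][1] == "NOUN" and tagged[i + 1][1] == "ADJ":
            tagged[i], tagged[i + 1] = tagged[i + 1], tagged[i]
            i += 2  # skip past the pair that was just swapped
        else:
            i += 1

    return " ".join(word for word, _ in tagged)


print(translate("la casa blanca es grande"))
```

Every new word, exception or word-order pattern needs another dictionary entry or another rule of this kind, which is why real rule-based systems grow so large.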

The greatest strength and the greatest weakness of this approach both lie in its inflexibility. Each rule must be written down separately, including all possible spellings, contextual meanings, exceptions, etc., which requires extremely costly and time-consuming programming. On the other hand, since the grammar rules for texts from different fields are generally the same, only the rules for word choice need to be changed to adapt the system to a new field. In addition, the approach does not require any text corpora and is therefore well suited for translating smaller languages, or at least for taking the first steps in that endeavor.

The system used in the Georgetown-IBM experiment was also a rule-based machine translator. It was based on only six rules, and its developers predicted that approximately 100 rules would be enough to translate any scientific Russian text into English successfully. We now have systems that use tens of thousands of rules, and the results are still far from being generally usable. Besides, the more rules a system has, the more complicated it becomes, which increases the likelihood of internal contradictions and new errors.

Statistical machine translation

Warren Weaver described the principles of statistical machine translation as early as 1949 in the aforementioned memorandum, but they were not put into practice until the end of the 1980s, because the necessary bilingual text corpora and computing power had been lacking. Statistical machine translation is based on the statistical analysis of bilingual parallel corpora (collections of texts paired with their translations in another language). This means that the machine chooses a translation based on how frequently a given word or phrase in the target language appears in the corpus as the counterpart of a word or phrase in the source language.
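The frequency-based choice described above can be sketched in a few lines of Python. This is a simplified illustration, not a real engine: the list of English-French phrase pairs is invented and assumed to be already aligned, and the function names are hypothetical. Real statistical systems also have to learn the alignments themselves and typically combine such frequencies with a model of the target language.

```python
from collections import Counter, defaultdict

# Hypothetical phrase pairs, as if already extracted from an aligned parallel corpus.
ALIGNED_PAIRS = [
    ("bank", "banque"),
    ("bank", "banque"),
    ("bank", "banque"),
    ("bank", "rive"),
    ("house", "maison"),
    ("house", "maison"),
]


def build_table(pairs):
    """Count how often each target phrase appears as the counterpart of a source phrase."""
    counts = defaultdict(Counter)
    for source, target in pairs:
        counts[source][target] += 1
    return counts


def translation_probs(counts, source):
    """Relative frequency of each candidate translation of `source`."""
    if source not in counts:
        return {}
    total = sum(counts[source].values())
    return {target: n / total for target, n in counts[source].items()}


def best_translation(counts, source):
    """Pick the most frequent counterpart; pass unknown phrases through unchanged."""
    if source not in counts:
        return source
    return counts[source].most_common(1)[0][0]


table = build_table(ALIGNED_PAIRS)
print(translation_probs(table, "bank"))
print(best_translation(table, "bank"))
```

The sketch also hints at the main weakness discussed below: with a small or mixed corpus, the counts are unreliable, and the most frequent counterpart is not necessarily the right one in a given context.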

Both Google and the European Commission (among others) used SYSTRAN’s rule-based translation system before eventually switching to statistical systems (in 2007 and 2010, respectively). The main reasons are that statistical systems are cheaper (most of the work is done by the machine, not the programmer), do not require in-depth knowledge of the languages involved, produce more natural-sounding translations because they are based on texts written by humans, can be applied to many languages without major changes, and essentially improve themselves as the corpora grow.

The downsides of statistical machine translation are the need for large corpora (which are still not available for many languages), thematic limitation (if a corpus includes texts from several fields with different language use, the results become inconsistent), and difficulties with morphologically complex languages and with language pairs that have very different structures. For example, in an article in the journal Õiguskeel, translator Ingrid Sibul noted that evaluations of statistical machine translation conducted at the European Commission revealed that the system used there provided the best results when translating from English into Portuguese and Spanish and the poorest results when translating into Estonian, Finnish and Hungarian.

Neural machine translation

The newest approach to machine translation is neural machine translation (NMT). Although NMT also uses language corpora and statistical calculations, NMT engines are built on artificial neural networks. You could say that artificial neural networks are trained to translate: they search for patterns in the texts provided (somewhat similarly to the human brain) and then produce translations themselves based on the patterns they detect. Unlike statistical translation systems, NMT engines look at the entire source sentence instead of individual phrases, which yields more grammatically correct and fluent translations.

Within just a few years, NMT systems reached the level that statistical translation engines had taken decades to achieve, and by the end of 2016, most of the leading machine translation providers, including Google, Microsoft and SDL, had switched to neural translation. By the end of 2017, neural translation had also been introduced by the European Commission. However, even though various comparisons and assessments have indicated the superiority of the latest NMT engines over statistical engines in many fields and language pairs, this approach does not come without its downsides.

First, neural translation also requires a lot of source data (several studies have indicated that it needs even more than statistical systems), since the machine is unable to find the right patterns without it. Second, neural systems tend to sacrifice accuracy for fluency, leading to more contextual and terminological errors than statistical translation engines make. Readers may not even notice these errors, since the translation seems so natural. Neural machine translation has been said to “hallucinate” translations, for example by translating Ahvenamaa (Estonian for Åland) as Russia (Venemaa in Estonian), since the two Estonian names look alike. And finally, since NMT systems are so-called black boxes that construct their “world view” themselves and largely invisibly, it is much more difficult to correct their work, as it is basically impossible to ascertain the sources of mistakes.

Hybrid systems

Since the aforementioned approaches all have their strengths and weaknesses, more and more experiments are being conducted in combining them, for example by pre- or post-processing neural translation with a statistical translation engine or vice versa. In the right circumstances, this has helped to improve translation outcomes, but it also means that the system becomes more complicated. In addition, subjecting translations to additional rules or statistics limits the general usability of the solution, so that it leads to better results only in very narrow fields with a specific style and terminology. Such solutions are provided, for example, by the language technology company Omniscien Technologies on their machine translation platform Language Studio.

Current situation in machine translation

In a professional context, therefore, machine translation is currently suitable for narrow fields where the use of language is very uniform and unambiguous, such as weather reports, certain legal texts and tables of technical data. Even then, the texts are usually reviewed by a human translator or language editor. Machine translation can generally be used as a way to increase productivity, not as an independent translation tool.

However, machine translation does not have to be perfect to be useful. There are currently dozens of general machine translation applications on the market that translate both text and speech, including the well-known Google Translate, Skype Translator and Baidu Translate. Although these translation systems make major and ridiculous mistakes due to their wide scope, they still enable users to grasp the general meaning of the translated message. This may be of great help when trying to understand a person writing or speaking a foreign language on communication networks, when learning a foreign language independently, and also when travelling alone in an unfamiliar country, since many of those services are integrated into mobile devices.

A look behind all the hype

Since the opportunities to use machine translation are ever increasing, it has become a profitable global business. As a result, the topic comes with a lot of marketing hype, which is amplified further in the media by incompetence and clickbait. In his Master’s thesis completed at the University of Copenhagen, Mathias Winther Madsen has provided a great overview of how complex it is to assess the actual level of machine translation systems.

Among other things, he mentions that research in machine translation is often done in great secrecy, the evaluation methods for the systems have several shortcomings, the background of the evaluations themselves is often not explained, and achievements tend to be magnified and shortcomings played down due to fierce competition. Several examples of this can be found from 2018 alone. For example, in March 2018, the media was buzzing about how Microsoft had managed to develop the first machine translation system for Chinese with translation quality equal to that of humans.

First, it should be noted that texts were only translated from Chinese into English (a tremendous amount of data is available for developing systems in both languages) and that only general news articles were translated using the Microsoft system. Several linguists also soon expressed their skepticism, criticising among other things the fact that people who were not translators were employed to evaluate the quality of the translations and that the translations were evaluated sentence by sentence rather than as a coherent text (which is still a major weakness of machine translation). The blog of the market research company Common Sense Advisory concluded that it would be more accurate to say that “in highly artificial conditions, machine translation is now on par with low-quality human translation”.

More major news arrived in October, when the Chinese tech giant Baidu announced that it had developed STACL, a system providing simultaneous interpretation, interpreting speech in real time from English into German and from Chinese into English. Previous machine interpreting systems translated speech one sentence at a time. Apparently, the Baidu system lets the user choose how many words it waits for the speaker to say before it starts interpreting (waiting longer increases accuracy), and it is able to predict upcoming text based on what it has heard so far, in order to overcome problems caused by differing sentence structures between languages.
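The waiting period can be pictured as a simple fixed-lag schedule. The Python sketch below is only one plausible reading of the description above, not Baidu’s implementation: the function name, the READ and WRITE action labels and the parameter k are assumptions made for illustration, and the step that actually produces (and predicts) target words with a neural model is left out entirely.

```python
def fixed_lag_schedule(source_words, k, target_len):
    """Interleave READ and WRITE actions so that output lags input by k words.

    Hypothetical sketch: before writing target word number t (counting from 0),
    the system must have heard min(k + t, len(source_words)) source words.
    """
    actions = []
    words_read = 0
    for t in range(target_len):
        needed = min(k + t, len(source_words))
        while words_read < needed:
            actions.append(("READ", source_words[words_read]))
            words_read += 1
        # A real system would generate a target word here, possibly
        # guessing at source words it has not heard yet.
        actions.append(("WRITE", t))
    return actions


for action in fixed_lag_schedule(["w1", "w2", "w3", "w4"], k=2, target_len=4):
    print(action)
```

With k=2, the system listens to two words, then alternates between writing one word and listening to one more until the speaker finishes. A larger k gives the system more context before it commits to an output word, at the cost of a longer delay.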

The media was very enthusiastic about it and even suggested that interpreters should start updating their CVs. However, although it was definitely a step in the right direction, this achievement was not nearly as groundbreaking as it was made to appear. As was summarised in an article published by the language industry intelligence company Slator, STACL’s output quality is below the current state of the art even with a long five-word waiting period, and the system is not able to go back and correct its possible (and, let us be honest, unavoidable) mispredictions. On the other hand, such systems will make extremely costly interpreting services more widely available and affordable in the future. In any case, both of the aforementioned examples serve as a warning to treat great breakthroughs announced in the media with caution.

Can machine translation replace human translators?

In its nearly 70 years of development, machine translation has come quite far. So far, in fact, that even the most pessimistic among us might think that it should not take more than ten years, or a couple of decades at most, to completely solve the problem of machine translation and eliminate the translator’s profession altogether, especially now that artificial neural networks and deep learning technologies (which may seem like pure utopia at first glance) have entered the field. However, neither statistical nor even neural machine translation is as novel or magical as it may seem: the first principles of how they work were already written down in the 1940s.

And as Google’s machine learning researcher François Chollet said in an article published in Wired, a human cannot be completely replaced by merely feeding data into a machine and increasing the number of layers in a neural network. The translation process is too complex for this. Whether a machine translation system looks at a word, a phrase or a sentence at a time, it will never be enough. This is because a (good) human translator also considers the “hidden information” when translating: the author’s attitude, connections between sentences, the text as a whole, the text’s position in society (i.e. both the cultural context and the objective of the text) and all of the translator’s own previous experience and knowledge. The translator basically draws on an interconnected world view that comes from being a member of society, navigating in it and learning to understand humans, and does not rely only on statistics or general patterns. And if necessary, the translator will search for additional information.

Even if a software system contains entire encyclopedias, it can never understand the relations between its entries like a human mind can, as it takes much more than recording numbers and words. Therefore, as Tilde’s language technologist Martin Luts said in an interview with Linnaleht: “As long as a machine is not able to feel ashamed for its translation, machine translation will not be good enough.”

What is the likely future of machine translation?

Advancements in technology and societal impact

As we mentioned before, machine translation does not have to be perfect to be useful. For example, according to recent data, the currently highly imperfect Google Translate is used to translate nearly 143 billion words every day. There is definitely no stopping the development of machine translation systems, and several minor problems can be overcome just by combining or optimising existing solutions. The amount of data available for even the smallest languages is increasing every day.

As a result of advancements in machine translation and developments in e-commerce, international communication, trade and tourism, machine translation can be expected to keep spreading into communication networks, the camera and audio recording applications of smartphones, online stores, etc. One could even speculate about two consequences in a world where everyday language barriers can be overcome with just the push of a button. First, foreign language proficiency could begin to decline. Second, if the need for machine translations keeps growing but their fluency does not improve fast enough, they could even start affecting the natural use of language by humans.

The future of translation work

The development of machine translation will no doubt have an impact on the work of translators and translation agencies. More straightforward commercial texts such as user manuals and mass-produced documents can be expected to be translated by machines more and more. It is unlikely, however, that this will happen without human supervision. Rather, translators will act more as language editors and will need a better understanding of the way machines “think” in order to identify their shortcomings and react to them.

Since machine translation will, at least for a while, provide its best results only in very narrow fields, future translators must have an even better understanding of the various systems than they do now and must be able to choose and apply them based on the field of the translation project, thus also taking on the role of a technology specialist. However, human translators can definitely not be replaced for texts that require perfect translation. The same goes for creative texts and for poorly constructed ones, which are unconventional and therefore incomprehensible to machines. As was said in the aforementioned blog of Common Sense Advisory, this means that only a translator who translates like a machine should be worried about machine translation.

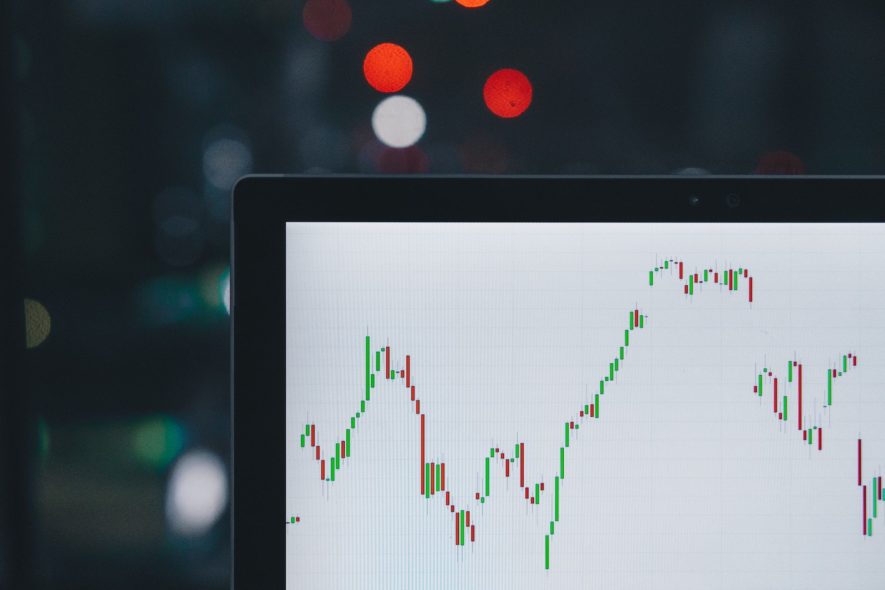

The future of the translation services market

Does this mean, then, that translators will have less work as the quality of machine translation improves and it becomes more widely used? Even if machine translation cannot achieve everything, one could assume that if it increases a translator’s productivity, meaning that one translator is able to do more work than before with the help of machine translation, fewer translators will be needed. At least for now, the actual trend is completely the opposite. Translation services becoming more affordable, more available and faster has only increased demand. In 2014, the US business magazine Inc. chose the translation services sector as one of the best fields to start a business in, and as of 2018, Common Sense Advisory predicts that the sector will continue to grow.

Among other things, this growth has been facilitated by globalisation and the development of e-commerce, which in turn have been boosted by cheaper, more available and better-quality language services. E-commerce and other Internet services have also given rise to website localisation services, which require quite creative work that machine translation has not had much success with yet. Advancements in machine translation make translation services more affordable and available and speed up turnaround, which will definitely open up many more doors. And although it is not yet clear whether new doors will open faster than old ones close in the long run, machine translation is definitely not coming after the jobs of good translators in the coming decades, especially in the Estonian language.
